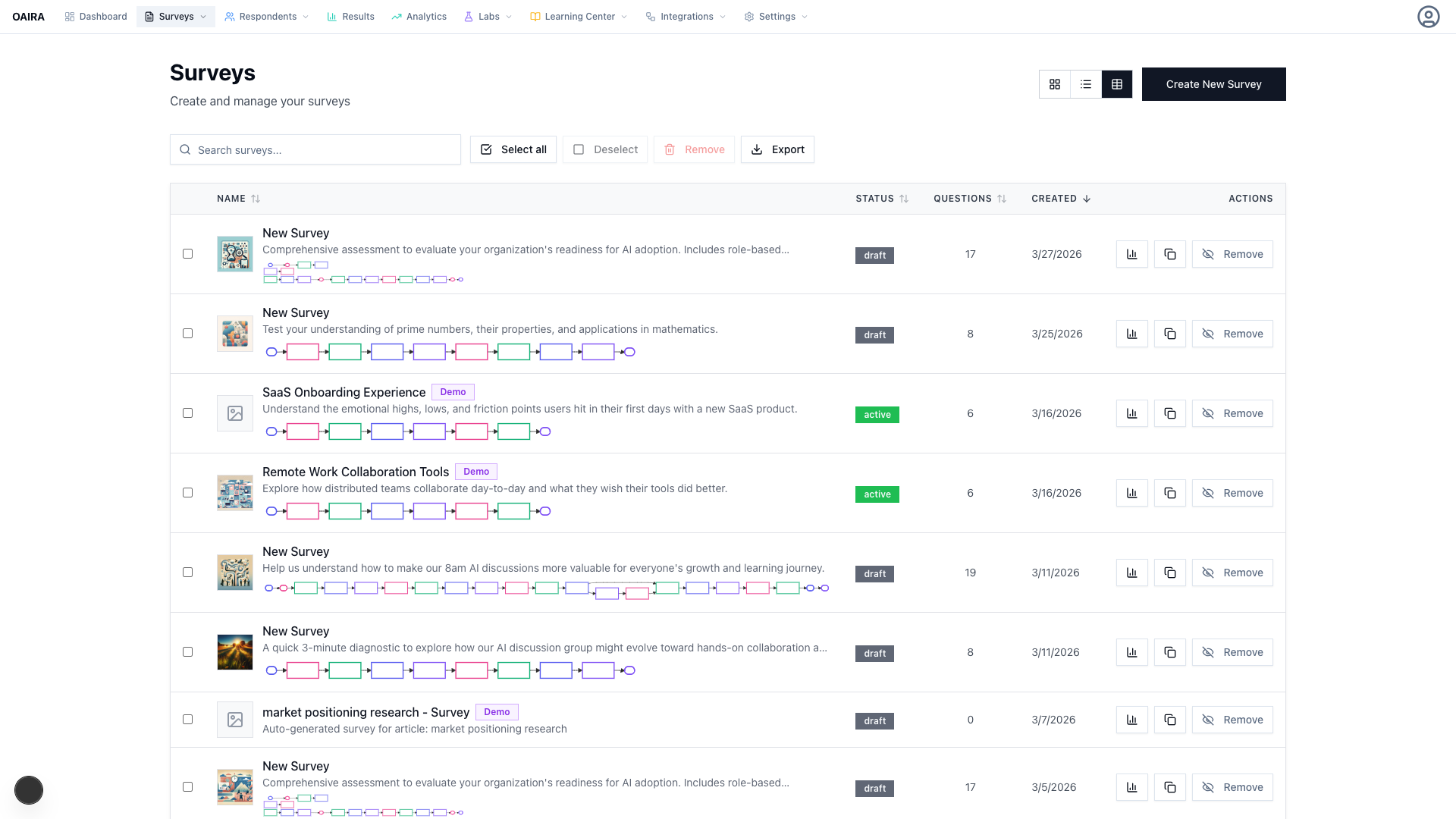


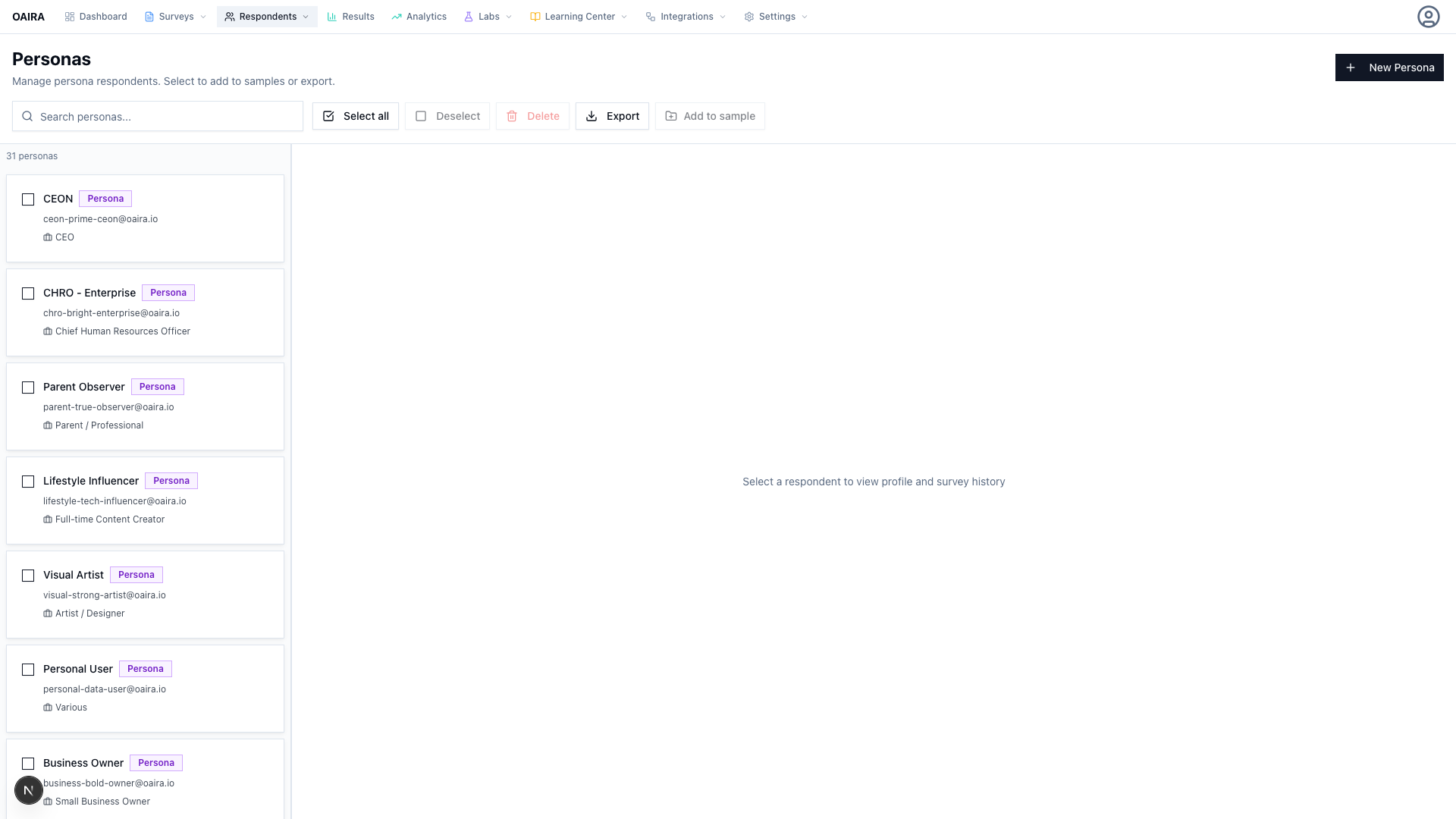

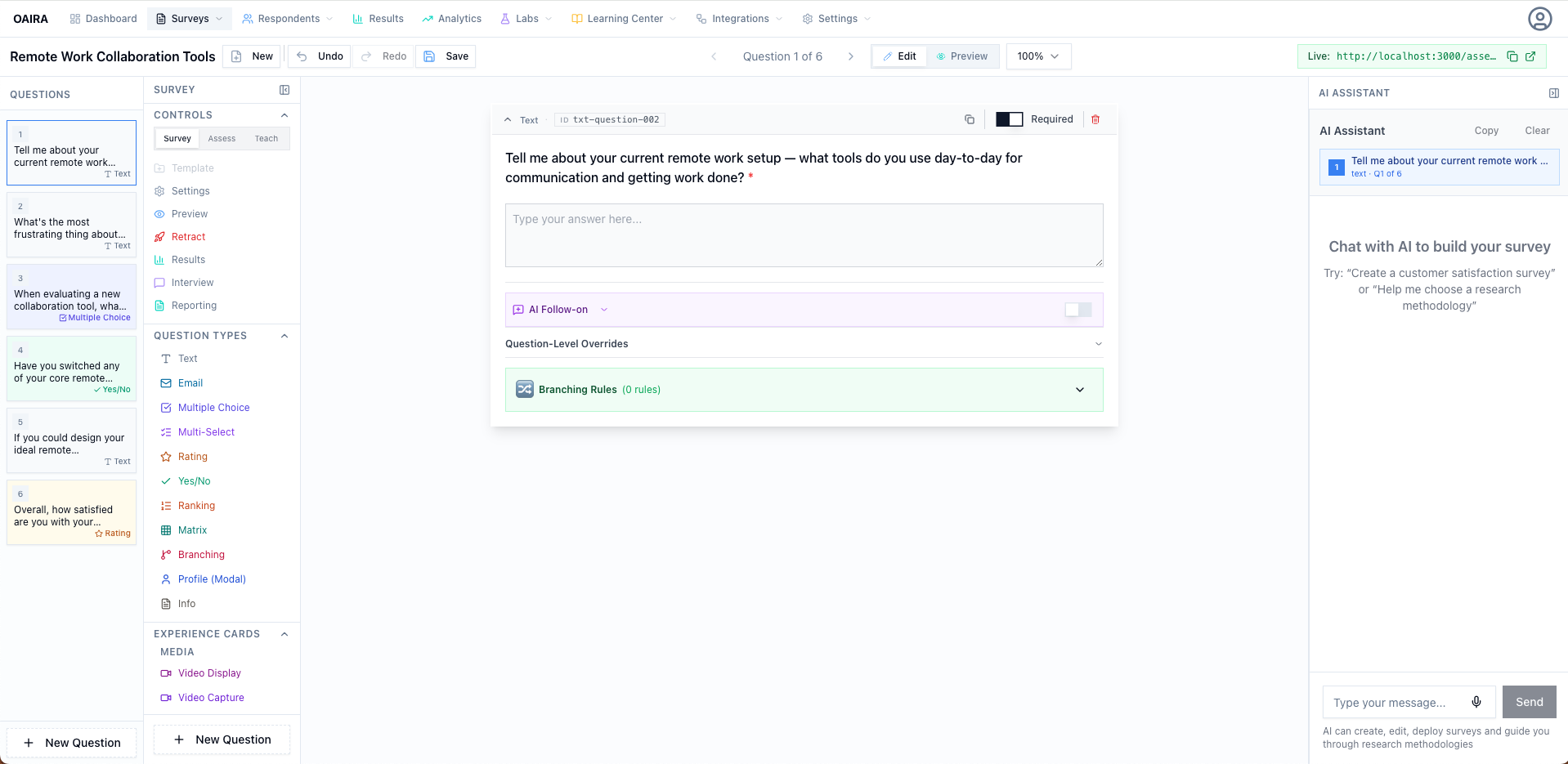

AI-Guided Survey Design

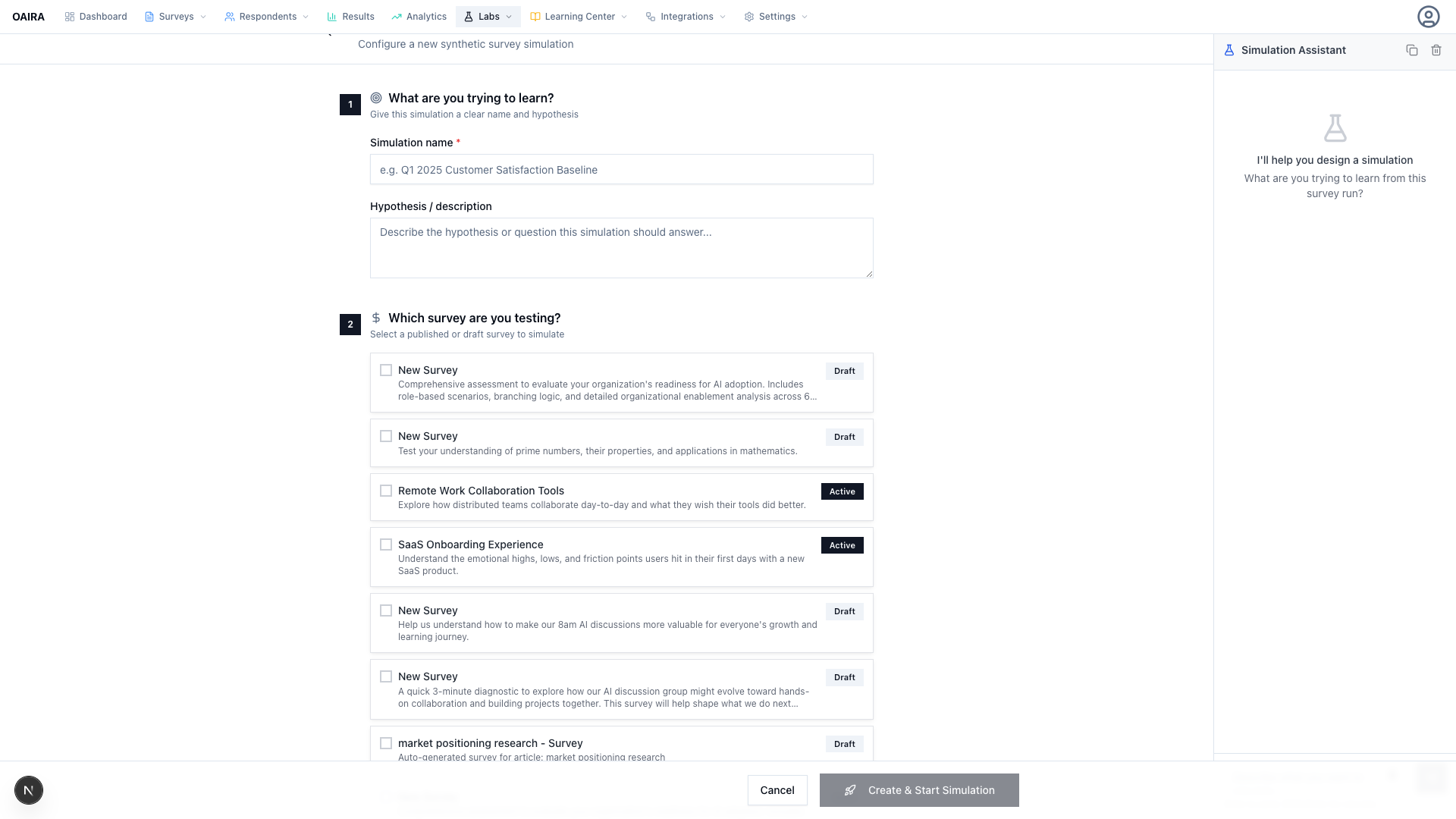

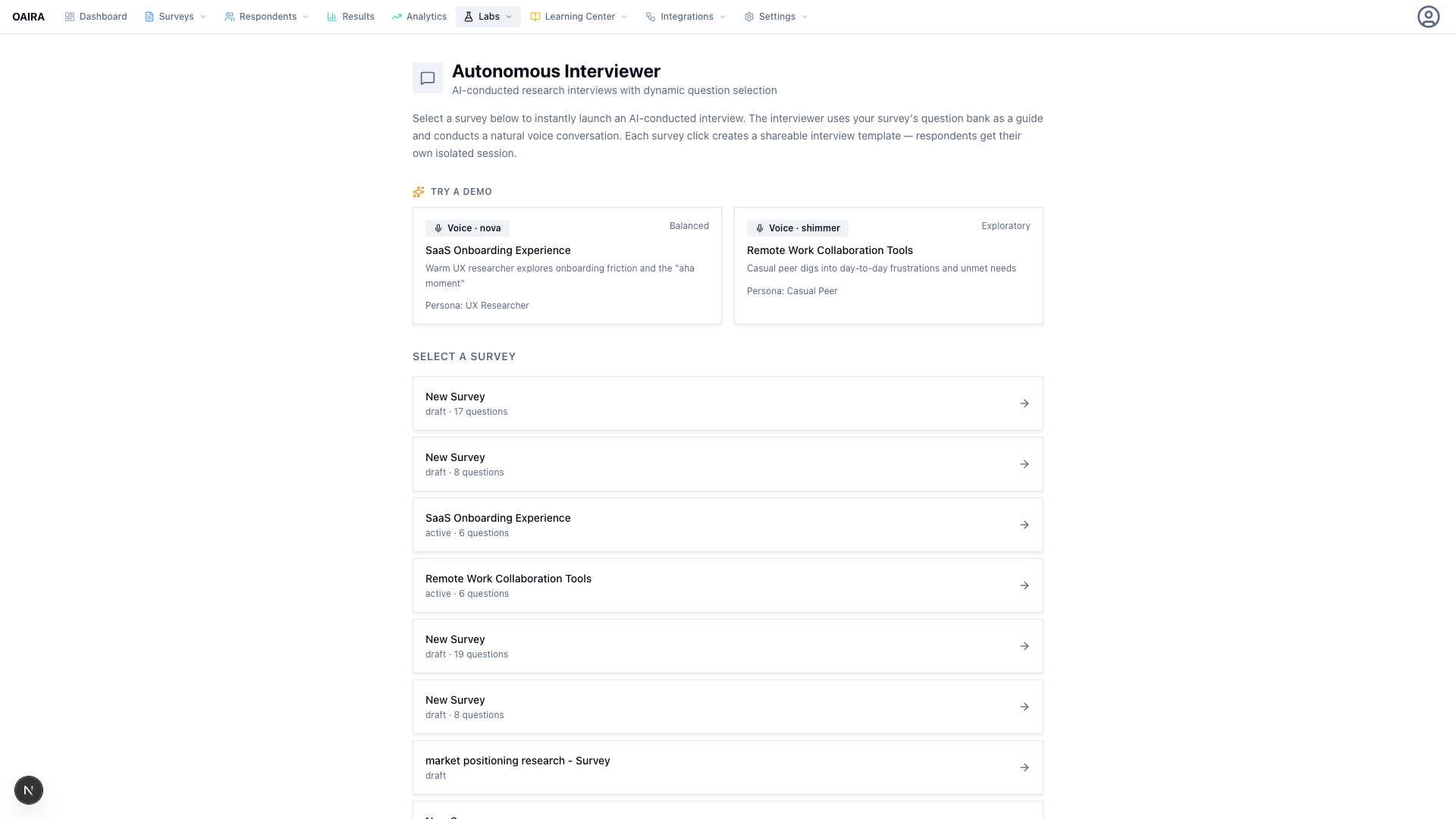

Describe your research goal. The AI recommends a methodology, walks you through guided steps, and generates a complete, structured survey. Instrument design is encoded as an executable workflow, not offered as advice.

8 methodologies · AI co-builder · real-time methodology scoring